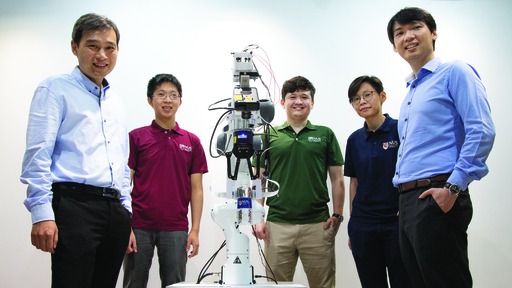

Photo Credit: National University of Singapore (NUS)

Two researchers from the National University of Singapore (NUS), both members of the Intel Neuromorphic Research Community (INRC), presented new findings demonstrating the promise of event-based vision and touch sensing in combination with Intel’s neuromorphic processing for robotics.

The work highlights how bringing a sense of touch to robotics can significantly improve capabilities and functionality compared to today’s visual-only systems and how neuromorphic processors can outperform traditional architectures in processing such sensory data.

The human sense of touch is sensitive enough to feel the difference between surfaces that differ by just a single layer of molecules, yet most of today’s robots operate solely on visual processing. Researchers at NUS hope to change this using their recently developed artificial skin, which, according to their research, can detect touch more than 1,000 times faster than the human sensory nervous system and identify the shape, texture and hardness of objects 10 times faster than the blink of an eye. Enabling a human-like sense of touch in robotics could significantly improve current functionality and even lead to new use cases.

Using Intel’s Loihi neuromorphic chip, the team shows how bringing a sense of touch to robotics can significantly improve capabilities and functionality compared to today’s visual-only systems.

To break new ground in robotic perception, the NUS team began exploring the potential of neuromorphic technology to process sensory data from the artificial skin using Intel’s Loihi neuromorphic research chip. In their initial experiment, the researchers used a robotic hand fitted with the artificial skin to read Braille, passing the tactile data to Loihi through the cloud to convert the micro bumps felt by the hand into semantic meaning. Loihi achieved over 92% accuracy in classifying the Braille letters while using 20 times less power than a standard von Neumann processor.

The team has implemented a chip that can draw accurate conclusions from the skin’s sensory data in real time while operating at a power level low enough for the chip to be deployed directly inside the robot. The result is a smart robot equipped with an ultra-fast artificial skin sensor.

“Unique demonstration of an AI skin system with neuromorphic chips such as the Intel Loihi provides an artificial brain that can ultimately achieve perception and learning, which is a major step forward towards power-efficiency and scalability,” said Assistant Professor Benjamin Tee from the NUS Department of Materials Science and Engineering and NUS Institute for Health Innovation & Technology.

Building on this work, the NUS team has successfully tasked a robot with classifying various opaque containers holding differing amounts of liquid, using sensory inputs from the artificial skin and an event-based camera.

Earlier this year, researchers from Intel Labs and Cornell University jointly published a research paper in Nature Machine Intelligence discussing the ability of Intel’s neuromorphic research chip, Loihi, to learn and recognize hazardous chemicals in the presence of significant noise and occlusion.